The AI Optimism Gap

CEOs are bullish on AI. Their employees less so. Who's right?

CEOs tend to be optimistic, risk-tolerant creatures. The job rewards people who can look for upsides and make bold moves.

It should come as no surprise that CEOs are more bullish on AI than the general population.

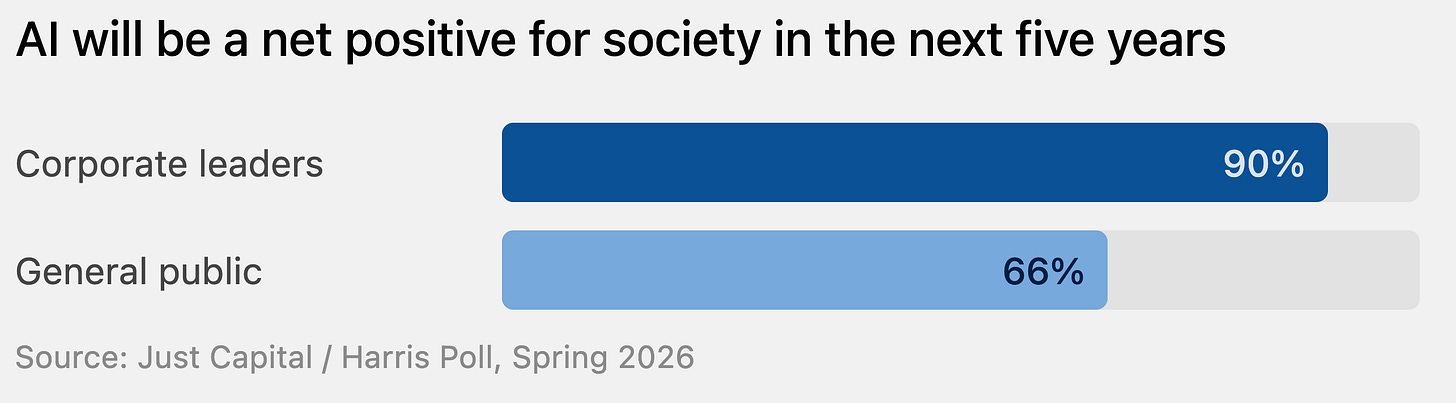

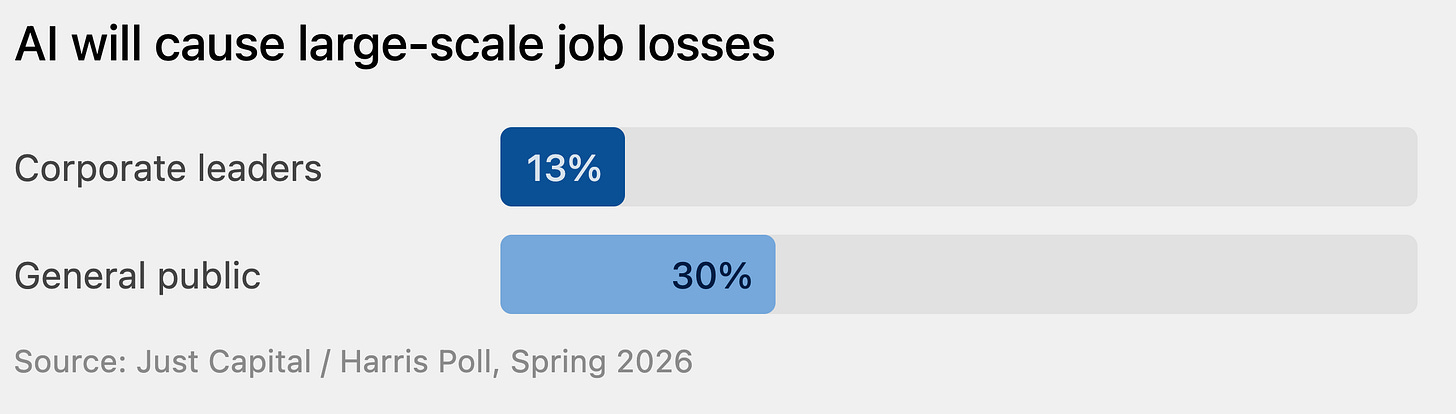

New research from Just Capital confirms this. A study released this week shows almost universal optimism from CEOs on AI’s societal impact, with non-CEOs trailing:

Another data point in the survey suggests why this might be. Few CEOs think AI will cause massive layoffs. More than twice as many non-CEO Americans think it will:

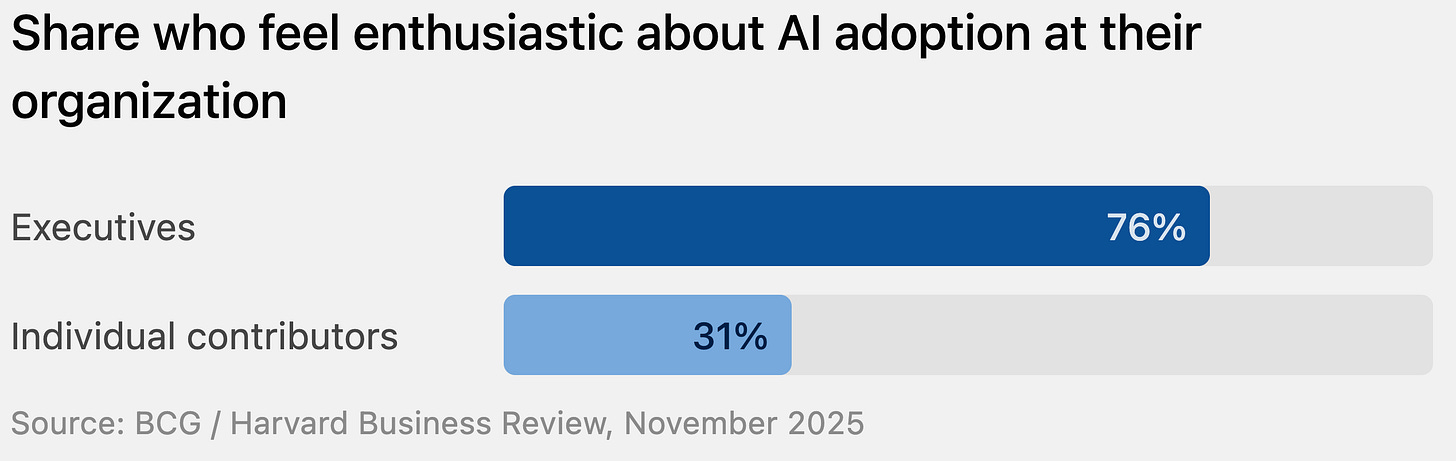

In light of this, are employees excited about adopting the tools they think may replace them? Not really, finds a different study:

So we’ve got a situation where the people leading businesses are significantly out of step with their employee base on almost every aspect of AI.

A few months ago, I wrote about the dangers of CEOs talking up AI in front of employees who are genuinely worried about what it means for them. When a CEO talks that way about productivity gains from LLMs and agents, a meaningful portion of the room hears a threat: You’re on borrowed time, buddy.

And yet… the CEO’s instinct toward optimism about AI isn’t wrong in my view. (Caveat: I am a CEO.)

Why Most CEOs Aren’t AI Doomers

“I’m fairly confident that AI will drive productivity, revenue growth, and therefore more hiring.” —Jensen Huang

I think there are good reasons to believe that AI will actually create more knowledge work roles in the future rather than fewer, and I would guess that most CEOs are thinking in the same general terms.

Most CEOs and entrepreneurial types don’t think about the demand for knowledge work as a fixed pie that gets divided among fewer people when productivity rises thanks to new tools. Rather, they think about how lower costs expand what’s possible for their workforce to get done in the first place.

This is not to say there won’t be layoffs in the short term, or that some types of jobs won’t go away. But it is to say that on a longer timeline and with a broader view, historical patterns indicate that we’re looking at more opportunity for employees, not less.

The macro prediction that we’ll need an order of magnitude fewer knowledge workers requires believing something that has never happened before: that humans, given a tool that makes valuable work dramatically cheaper, will choose to do less of it. I’m not convinced of that, and I think there’s reason to believe otherwise.

Accounting software didn’t reduce demand for financial analysis. It created demand for financial modeling that previously would have cost too much to commission.

Desktop publishing didn’t consolidate creative work into fewer hands. It spawned entire new categories of design work that hadn’t existed before.

The internet didn’t shrink marketing teams. It created disciplines that barely existed: SEO, digital advertising, content strategy, analytics.

Recent data points in this same direction. A Harvard Business School study from last month analyzed nearly all U.S. job postings from 2019 through early 2025 and found that:

Openings for routine, automation-prone roles fell 13% after ChatGPT’s debut, while demand for more analytical, technical, and creative jobs grew 20%.

This is not to downplay the upheaval AI brings. The transition will be difficult for some people, and the difficulty won’t fall evenly across the workforce. People are rightly and understandably nervous.

The Risk CEOs ARE Underestimating

I think the opportunity for CEOs is to do two things in tandem: (1) keep pushing forward on how the org can benefit from AI tools while (2) communicating transparently, credibly, and wisely about AI’s impact on the team.

To that end:

1. Be specific about how you personally are using AI.

As you use AI yourself (and you should be), communicate abundantly about how it’s going:

“I use AI to handle X, which used to eat Y hours of my week. That time now goes toward Z.”

Research from UKG found that when organizations are transparent about AI’s actual impact on workflows, three out of four employees say they would not only accept AI in their roles but get excited about it.

2. Publish an internal AI policy before you need one.

Eighty percent of employees say their company has yet to share guidelines on AI use. That vacuum is easily filled by rumor and anxiety. It can be simple and short, but consider a document that explains what tools the company uses, what data those tools can and can’t access, how AI-assisted work is handled, and how employees are encouraged to explore AI.

3. Consider your audience.

I talk a lot about how good leaders adapt their communication for various audiences, about how they think hard about how their words and actions will be interpreted. Ask people what they are thinking and feeling about AI. Assume that most employees in your org are at least to some degree worried about how it affects their jobs. Before you share the latest great new thing Claude or ChatGPT can do, do a quick test: If I were the employee who does this kind of work, how would I feel? What is the underlying message I want to give to this employee? How do I expect them to use this information?

4. Know your internal AI influencers.

Employees may be, as a group, less rosy about AI than CEOs, but plenty of them are enthusiastic. Who in the company is a convincing advocate for positive use of AI? Who can speak intelligently about how it is best used within the org? How can you empower them to bring skeptical team members on board?

5. Be transparent even when it’s hard.

In the short term, AI can indeed reshuffle roles and make certain work redundant. If you are looking at some kind of reorganization or even a layoff, be extra careful in your communication. Don’t reassure people right up to the day you send out the memo announcing a 10% staff cut. This kind of communication is one of the hardest things CEOs must do, but if people feel misled before a layoff, you will tank your credibility. Including with those who stay.

CEOs are usually the most optimistic and risk-ready members of their teams. It’s always critical for leaders to understand how they differ from the people they lead, and never more so than in our current age. To keep people from feeling threatened and to help them stay fully engaged, be intentional about how you talk about AI.